Articulated Part-based Model for Joint Object Detection and Pose Estimation

|

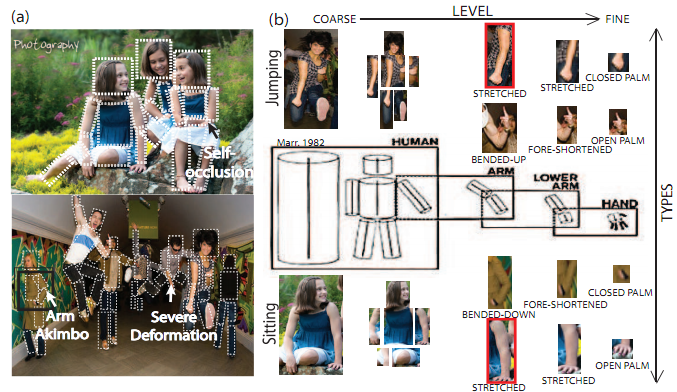

| Panel (a) shows large appearance variation and part deformation of articulated objects (people) with different poses (sitting, standing, and jumping, etc). (b) we propose a new model for jointly detecting objects and estimating their pose. Inspired by Marr[14], our model recursively represents the object as a collection of parts from a coarse-to-.ne level (e.g., see horizontal dimension) using a parent-child relationship with multiple part-types (e.g., see vertical dimension). We argue that our representation is suitable for "taming" such pose and appearance variability. |

- Min Sun and Silvio Savarese, "Articulated Part-based Model for Joint Object Detection and Pose Estimation" (pdf).

Despite recent successes, pose estimators are still somewhat fragile, and they frequently rely on precise knowledge of the object's location. Unfortunately, articulated objects are also very difficult to detect. Knowledge about the articulated nature of these objects, however, can substantially help in finding them in an image. It is therefore somewhat surprising that these two tasks are usually treated entirely separately. In this paper, we propose an Articulated Part-based Model (APM) for jointly detecting objects and estimating their poses. APM recursively represents an object as a collection of parts at multiple levels of detail, from coarse to fine, where parts at every level are connected to a coarser level through a parent-child relationship (Fig. 1(b), horizontal dimension). Parts are further grouped into part-types (e.g., left-facing head, long stretching arm) to model appearance variations (Fig. 1(b), vertical dimension). By sharing the appearance models of part-types and by decomposing complex poses into parent-child pairwise relationships, APM strikes a good balance between model complexity and model richness. Extensive quantitative and qualitative experiments on public datasets show that APM outperforms state-of-the-art methods. We also show results on two highly challenging articulated object categories from PASCAL VOC 2007: cats and dogs. Please see the paper for more details. The poster presented at ICCV is also available.
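To make the idea of a tree of parts with part-types and parent-child pairwise terms more concrete, here is a minimal sketch of how such a tree-structured model can be scored. It is not the paper's inference procedure. It assumes a generic bottom-up max-sum dynamic program, a 1-D grid of candidate locations, precomputed per-type appearance scores, and a quadratic deformation penalty around an expected parent-to-child offset. The names `Part`, `best_scores`, and `detect`, and all of their fields, are hypothetical.

```python
from dataclasses import dataclass, field

# Hypothetical sketch: a tree of parts (coarse parent -> finer children), each
# with several part-types. Not the APM paper's actual scoring or inference.


@dataclass
class Part:
    name: str
    # unary[t][loc]: assumed precomputed appearance score of part-type t at
    # location index loc on a 1-D grid (a simplification of 2-D image positions).
    unary: list[list[float]]
    # Assumed expected displacement from the parent's location, keyed by
    # (parent_type, own_type); missing pairs default to 0.
    offset: dict[tuple[int, int], int] = field(default_factory=dict)
    # Assumed weight of the quadratic deformation penalty relative to the parent.
    stiffness: float = 1.0
    children: list["Part"] = field(default_factory=list)


def best_scores(part: Part) -> list[list[float]]:
    """score[t][loc]: best score of the subtree rooted at `part` when `part`
    takes part-type t at location loc (bottom-up max-sum dynamic program)."""
    score = [row[:] for row in part.unary]  # copy so unary is not mutated
    for child in part.children:
        child_score = best_scores(child)  # solve the child's subtree first
        for pt, row in enumerate(score):
            for ploc in range(len(row)):
                best = float("-inf")
                # Maximize over the child's part-type and location.
                for ct, child_row in enumerate(child_score):
                    expected = ploc + child.offset.get((pt, ct), 0)
                    for cloc, s in enumerate(child_row):
                        deform = child.stiffness * (cloc - expected) ** 2
                        best = max(best, s - deform)
                row[ploc] += best
    return score


def detect(root: Part) -> tuple[float, int, int]:
    """Return (score, root part-type, root location) of the best configuration."""
    score = best_scores(root)
    return max((s, t, loc) for t, row in enumerate(score) for loc, s in enumerate(row))


if __name__ == "__main__":
    # Toy example: a coarse "body" part with one finer "head" child,
    # two part-types each, three candidate locations.
    body = Part("body", unary=[[0, 1, 0], [0, 0, 2]])
    head = Part("head", unary=[[3, 0, 0], [0, 0, 1]], offset={(0, 0): -1})
    body.children.append(head)
    print(detect(body))  # (4.0, 0, 1)
```

Each parent-child edge here costs time proportional to (parent types × child types × parent locations × child locations). A practical implementation would typically replace the inner loops with something faster, such as a generalized distance transform for quadratic deformation costs.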

Result Video (Adobe Flash is required)

BibTeX

@InProceedings{MinSun_iccv_2011,

author = {Min Sun and Silvio Savarese},

title = {Articulated Part-based Model for Joint Object Detection and Pose Estimation},

booktitle = {ICCV},

year = {2011},

}

Contact: wgchoi at umich dot edu